I conducted another assessment of the APIs available across the federal government this weekend. It is work I enjoy because I always learn so much while doing it. I learn about government agencies and what they do, but I also find some very interesting resources available via the APIs and developer portals that exist across different agencies. There is a wealth of data available across these government agencies, with more than half of the 150+ agencies possessing APIs, and almost fifty of them having a dedicated portal where they aggregate APIs across the agency. While I learn a number of different things, I’d say the most important thing I walk away with is always the renewed energy I need to keep advocating for API investment at the federal level.

A Lack of Investment

As I look through all of the different developer portals and APIs from federal agencies, I am regularly concerned by the lack of investment in doing APIs well. I know from experience that this is the number one thing holding back the value generated by APIs. Poor documentation, complex designs, and other common challenges plague federal government APIs. This isn’t exclusive to government APIs, and it exists across the private sector too, but it is uniquely a challenge in government because of the way things are funded and sustained, and because of the turnover that comes with election cycles. Despite all my concern around proper investment in government APIs, I am always left with enough inspiration to keep learning and evangelizing.

Rich Resources Available

I can spend days looking through all of the APIs available, but I have to spend my time wisely as part of this audit. I am not looking to go deep, just to assess the APIs across 150+ agencies. But after looking through all of the APIs, as well as the data portals that exist alongside or instead of APIs, I am left in awe of the rich resources that are available. You can tell this data is powering industry, and those who have the resources and are in the know are making use of it, but the impact would be significantly greater if everything were available via simpler, more modern APIs. You see a lot of data locked up in spreadsheets and proprietary visualization tooling, but in some cases you do come across data available as simple web APIs. There is a massive opportunity here for the federal government to take the lead on establishing standards, and on powering industry with this rich wealth of resources produced regularly.

Simple and Machine Readable

85% of the data available via federal government APIs and data portals requires domain knowledge, compute, and other tooling resources to process it and put it to work. While engaging in policy discussions in D.C. I hear a lot about transparency and accessibility of data resources, but I don’t always hear what is needed when it comes to the simplicity and machine readability required to work with data at web scale across many different companies and industries. The data being made available is rich in value, but only in the hands of a limited group who have the skills and resources. Making it available as simple APIs, and machine readable by default, would significantly widen who can use the data and how it can be applied. This would increase the value generated from the data, and thus expand the investment in making the data available in simple and machine readable ways.

Benefits of Developer Portals

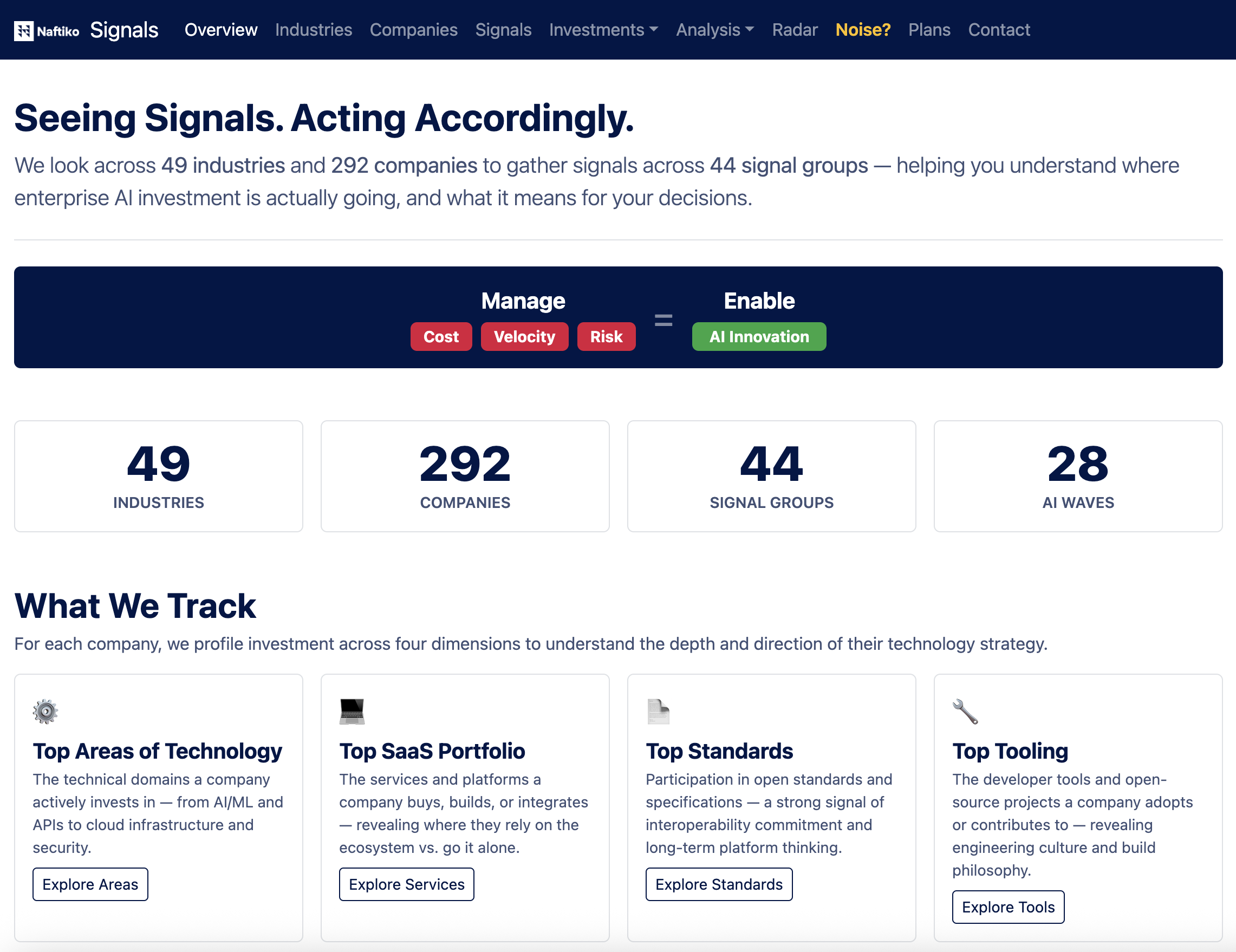

The 49 federal agencies that have some sort of centralized and aggregated portal for developers are clearly ahead of those that have ad hoc APIs or even just centralized data portals. While many of these portals are sparse and lack many of the things we take for granted across API portals in the private sector, they show a commitment to doing APIs and to the importance of aggregating APIs across an agency, providing a single place to discover resources. Developer portals allow APIs and other resources to be aggregated, making it easier for both private sector and public sector consumers to find what they need. In my opinion, every federal agency should be required to have both a developer and a data portal, because it is clear that those who do are seeing more value generation occurring across their digital resources.

Moving Forward with APIs

I want to keep evangelizing and incentivizing federal agencies to do APIs. I support the GSA continuing to provide guidelines, templates, and other resources that government agencies can use when delivering APIs, developer portals, and other building blocks of API operations. However, I am not convinced that discovery, sustainment, aggregation, and other aspects of delivering reliable APIs at scale should be occurring within agencies. I just don’t believe that government operates in a way that will get the most value out of the API lifecycle. It continues to treat APIs as a checkbox on a project list, rather than as the living, ongoing digital resources, capabilities, and experiences they need to be to realize their full potential. The federal government just isn’t set up to maximize the feedback loop associated with each API version, or to treat APIs as a product. There are too many historical barriers in place that prevent the nutrients from flowing between API producer and consumer, and I believe that a private sector layer is required to move things forward when it comes to federal government APIs.

Automating API Discovery

I have done several waves of these assessments of federal government APIs now. Due to constraints in time and resources I tend to limit these to what I can do manually and in a narrative way within 1-2 days. I am feeling like I am going to have to establish a more automated way to manage my ongoing assessment and monitoring of federal government APIs, and maybe even fire up my Adopta.Agency work, and begin adopting more datasets. I noticed that the Department of Education took down their centralized API portal, taking down several valuable APIs along with it. I really don’t have any automated way to tell me what other APIs were deprecated from audit to audit. If I don’t remember, it will take me even more work to compare each round. I have seen many efforts to index government agencies come and go. Some are done within government efforts, and others outside as part of private sector work. Nothing has stuck. I am feeling like I will have to reboot my APIs.json index for each agency and develop a more sophisticated approach to auditing, monitoring, and understanding which APIs come and go. Then maybe along the way begin investing in my vision of caching the most valuable APIs and data I find across my work.
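To make the audit-to-audit comparison concrete, here is a minimal sketch of how two rounds could be diffed to surface APIs that went away or newly appeared. It assumes each audit is saved as a simple mapping of agency name to a list of API entries with `name` and `baseURL` fields, loosely in the spirit of an APIs.json index. The snapshot shape, the function names, and the example.gov URLs are all hypothetical and only there for illustration.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical snapshot shape: agency name -> list of API entries,
# where each entry is a dict with at least a "baseURL" field.
Snapshot = Dict[str, List[dict]]


def api_keys(snapshot: Snapshot) -> Set[Tuple[str, str]]:
    """Flatten a snapshot into (agency, normalized baseURL) pairs."""
    return {
        # Normalize case and trailing slashes so cosmetic URL changes
        # are not reported as an API disappearing and reappearing.
        (agency, api["baseURL"].rstrip("/").lower())
        for agency, apis in snapshot.items()
        for api in apis
    }


def diff_audits(previous: Snapshot, current: Snapshot) -> Dict[str, List[Tuple[str, str]]]:
    """Return the APIs removed and added between two audit rounds."""
    before, after = api_keys(previous), api_keys(current)
    return {
        "removed": sorted(before - after),
        "added": sorted(after - before),
    }


# Made-up data for two audit rounds (not real agencies or endpoints).
previous_audit: Snapshot = {
    "Agency A": [{"name": "Grants", "baseURL": "https://api.example.gov/grants/"}],
    "Agency B": [{"name": "Schools", "baseURL": "https://api.example.gov/schools"}],
}
current_audit: Snapshot = {
    "Agency A": [
        {"name": "Grants", "baseURL": "https://API.example.gov/grants"},
        {"name": "Loans", "baseURL": "https://api.example.gov/loans"},
    ],
}

changes = diff_audits(previous_audit, current_audit)
for agency, url in changes["removed"]:
    print(f"Removed since last audit: {agency} {url}")
for agency, url in changes["added"]:
    print(f"New since last audit: {agency} {url}")
```

Keying on the agency plus a normalized base URL is just one choice. It will miss an API that moves to a new host, so a more sophisticated approach would likely need a stable identifier per API in the index.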

The Work Never Ends

I’ve seen enough investment in new APIs, and enough clear usage of existing government APIs, to maintain hope. For some reason, seeing important APIs go away, and continuing to see new data sets emerge that would realize more meaningful applications if there were an API, actually gives me hope, and it energizes me each round to do a little more work. I think the challenge is that I need a stronger foundation for my work, one that accounts for the fact that my energy and time for it will come and go, while still allowing me to come back from time to time and move things forward without losing momentum. I also need to figure out whether I do this as a Postman or an API Evangelist project. I am thinking that there is more forgiveness for sporadic investment when it is done under the API Evangelist brand, whereas there is more expectation of continued investment by Postman. I am thinking I will use my new Jekyll site template for automating and programmatically managing my auditing of federal government APIs using APIs.json, while establishing a plan for identifying APIs that go away, allowing for the caching of APIs outside of government, and even the development of new APIs from government data, keeping Adopta.Agency alive in a more ongoing way.